Multi-modal integration as predictions. The case of the McGurk Effect

Bodily interaction with the environment provides noisy information from different sensory modalities. This information is coherently integrated during bottom-up processing, which reduces uni-sensory ambiguity and allows percepts to arise. This process is known as multi-modal integration, and it is an essential cognitive process for reducing environmental uncertainty and enhancing perception. Consequently, a broad line of research seeks to understand and model the factors involved in it.

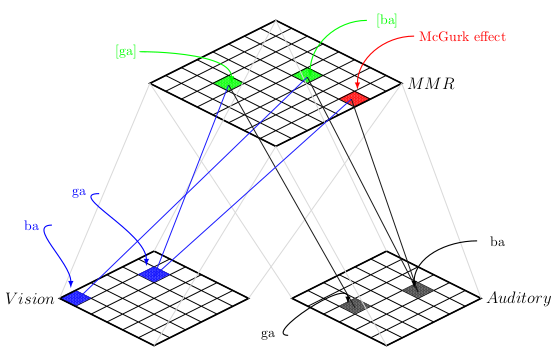

During speech perception we constantly sense multiple sources of information. The McGurk effect is typically regarded as a prototypical example of multi-modal integration in language, and it has been widely used to study how audio-visual information is integrated during speech perception. The effect arises when incongruent auditory and visual syllable stimuli are paired and perceived as a different syllable (typically, auditory /ba/ + visual /ga/ = percept “da”).

We developed a biologically plausible, self-organized hierarchical architecture, based on self-organizing maps (SOMs) and statistical learning, to study multi-modal integration in speech. The architecture allows the McGurk effect to be studied purely in terms of bottom-up processing of audio-visual information, and of how this information is integrated and represented as a direct consequence of previously learned multi-modal associations.
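As a rough illustration of what a hierarchical SOM arrangement of this kind could look like, the sketch below trains one small map per modality and a top map on their combined winners. It is not the architecture used in this work: the 1-D map layout, the one-hot coding of unimodal winners, the learning-rate and neighbourhood schedules, and all feature values are assumptions made only for the example, and the names `train_som`, `multimodal_input` and `best_matching_unit` are hypothetical.

```python
import math
import random


def best_matching_unit(weights, x):
    """Index of the unit whose weight vector is closest (squared Euclidean) to x."""
    dists = [sum((wi - xi) ** 2 for wi, xi in zip(w, x)) for w in weights]
    return dists.index(min(dists))


def train_som(data, n_units, epochs=30, lr0=0.5, seed=0):
    """Train a 1-D SOM (a chain of units) with a shrinking Gaussian neighbourhood."""
    rng = random.Random(seed)
    dim = len(data[0])
    weights = [[rng.random() for _ in range(dim)] for _ in range(n_units)]
    for epoch in range(epochs):
        decay = 1.0 - epoch / epochs
        lr = lr0 * decay                           # assumed linear decay
        sigma = max(0.5, (n_units / 2.0) * decay)  # assumed neighbourhood width
        for x in rng.sample(data, len(data)):      # shuffled pass over the data
            bmu = best_matching_unit(weights, x)
            for j, w in enumerate(weights):
                h = math.exp(-((j - bmu) ** 2) / (2.0 * sigma ** 2))
                for k in range(dim):
                    w[k] += lr * h * (x[k] - w[k])
    return weights


def one_hot(index, size):
    return [1.0 if i == index else 0.0 for i in range(size)]


def multimodal_input(audio_som, visual_som, audio, visual):
    """Concatenate one-hot codes of the unimodal winners (an assumed coding)."""
    a = one_hot(best_matching_unit(audio_som, audio), len(audio_som))
    v = one_hot(best_matching_unit(visual_som, visual), len(visual_som))
    return a + v


# Hypothetical 2-D feature vectors for three congruent syllables (not real data).
audio_data = {"ba": [0.1, 0.2], "da": [0.5, 0.5], "ga": [0.9, 0.8]}
visual_data = {"ba": [0.9, 0.1], "da": [0.5, 0.4], "ga": [0.2, 0.7]}

audio_som = train_som(list(audio_data.values()), n_units=4)
visual_som = train_som(list(visual_data.values()), n_units=4, seed=1)

# The top map only ever sees congruent audio-visual pairs during training.
congruent = [
    multimodal_input(audio_som, visual_som, audio_data[s], visual_data[s])
    for s in audio_data
]
top_som = train_som(congruent, n_units=6, seed=2)

# McGurk-like probe: auditory /ba/ paired with visual /ga/.
probe = multimodal_input(audio_som, visual_som, audio_data["ba"], visual_data["ga"])
winner = best_matching_unit(top_som, probe)
```

Because the top map is trained only on congruent pairs, an incongruent probe is necessarily represented by a unit shaped by learned congruent associations, which is the bottom-up property the paragraph above describes.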

For testing, we trained several architectures and measured the activation similarity between McGurk-like stimuli and congruent audio-visual ones. Our results suggest that the illusory percepts that are behaviorally reported when incongruent audio-visual information is presented are the best congruent multi-modal representations for reducing uncertainty.
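One way such an activation-similarity comparison could be computed is sketched below. Cosine similarity is an assumption here, since the similarity measure is not specified in the text, and the activation vectors are invented toy values used only to show the mechanics. They are not results from the model.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two activation vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def most_similar_congruent(probe, congruent_activations):
    """Label of the congruent stimulus whose activation is closest to the probe."""
    return max(
        congruent_activations,
        key=lambda label: cosine_similarity(probe, congruent_activations[label]),
    )


# Toy map activations for congruent stimuli (hypothetical values, one per unit).
congruent_activations = {
    "ba": [1.0, 0.0, 0.0],
    "da": [0.0, 1.0, 0.0],
    "ga": [0.0, 0.0, 1.0],
}

# Toy activation for an incongruent probe (auditory /ba/ + visual /ga/).
mcgurk_activation = [0.3, 0.9, 0.4]

closest = most_similar_congruent(mcgurk_activation, congruent_activations)
```

With these toy numbers `closest` is `"da"`, simply because the second component of the probe vector is the largest. In an actual evaluation the vectors would come from the trained maps.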

The proposed model successfully reproduced the illusory phoneme effect, in terms of the model’s neural activation, when presented with McGurk stimuli, although the results might not be in line with the classical behavioral findings. Our results suggest that, during speech perception, the reliability of each sensory modality, as well as their integration, depends on previously learned multi-modal associations.